18 Comparing Two Groups

Examples and Introduction

In Part 1, we estimated a single population mean using a sample mean. Now we compare means between two groups. This is a fundamental statistical task: estimating the difference in means between subpopulations.

Black vs. non-Black Voters in GA

$$

\[ \small{ \begin{array}{r|rr|rr|r|rr|rrrrr} \text{call} & 1 & & 2 & & \dots & 625 & & & & & & \\ \text{question} & W_1 & Y_1 & W_2 & Y_2 & \dots & X_{625} & Y_{625} & \overline{X}_{625} & \overline{Y}_{625} &\frac{\sum_{i:W_i=0} Y_i}{\sum_{i:W_i=0} 1} & \frac{\sum_{i:W_i=1} Y_i}{\sum_{i:W_i=1} 1} & \text{difference} \\ \text{outcome} & \underset{\textcolor{gray}{x_{869369}}}{\textcolor[RGB]{248,118,109}{0}} & \underset{\textcolor{gray}{y_{869369}}}{\textcolor[RGB]{248,118,109}{1}} & \underset{\textcolor{gray}{x_{4428455}}}{\textcolor[RGB]{0,191,196}{1}} & \underset{\textcolor{gray}{y_{4428455}}}{\textcolor[RGB]{0,191,196}{1}} & \dots & \underset{\textcolor{gray}{x_{1268868}}}{\textcolor[RGB]{248,118,109}{0}} & \underset{\textcolor{gray}{y_{1268868}}}{\textcolor[RGB]{248,118,109}{1}} & 0.28 & 0.68 & \textcolor[RGB]{248,118,109}{0.68} & \textcolor[RGB]{0,191,196}{0.69} & 0.01 \\ \end{array} } \]

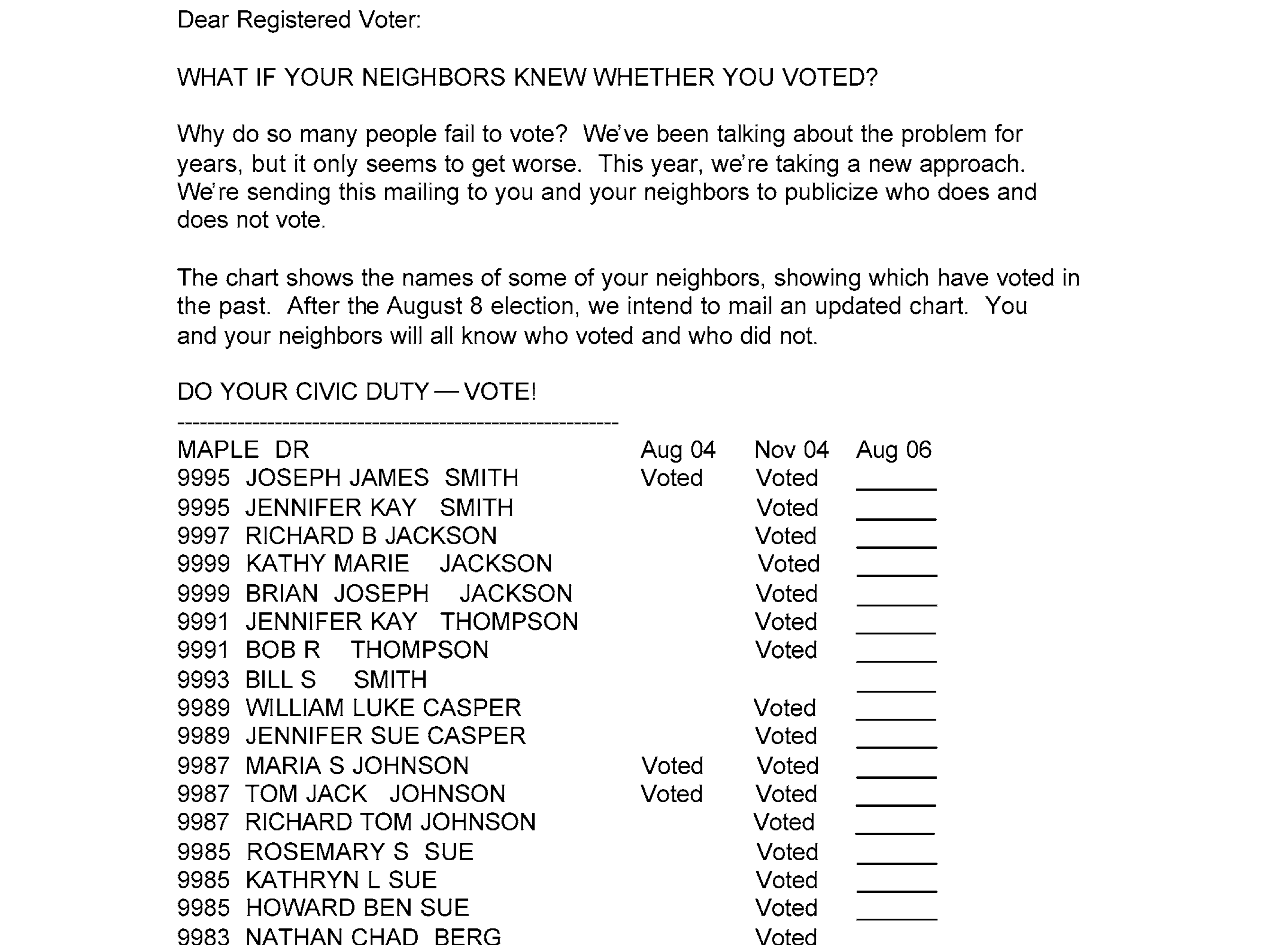

Recipients of Threatening Letters vs. Others in MI

- The green dots are people who received a letter pressuring them to vote. Figure 24.1.

- The red dots are people who didn’t.

Residents With vs. Without 4-Year Degrees in CA

| income | education | county | |

|---|---|---|---|

| 1 | $55k | 13 | orange |

| 2 | $25k | 13 | LA |

| 3 | $44k | 16 | san joaquin |

| 4 | $22k | 14 | orange |

| ⋮ | |||

| 2271 | $150k | 16 | stanislaus |

- In these examples, our sample was drawn with replacement from some population. Or we’re pretending it was.

- In this one, it’s the population of all California residents between 25 and 35 with at least an 8th grade education.

The locations shown on the map are made up. They’re not the actual locations of the people in the sample.

The survey includes some location information for some people, but as you can see in the table, not for everyone.

Population

| income | education | county | |

|---|---|---|---|

| 1 | $22k | 18 | unknown |

| 2 | $0k | 16 | solano |

| 3 | $98k | 16 | LA |

| ⋮ | |||

| 5677500 | $116k | 18 | unknown |

Sample

| income | education | county | |

|---|---|---|---|

| 1 | $55k | 13 | orange |

| 2 | $25k | 13 | LA |

| 3 | $44k | 16 | san joaquin |

| ⋮ | |||

| 2271 | $150k | 16 | stanislaus |

For illustration, I’ve made up a fake population that looks like a bigger version of the sample.

Our Estimator and Target

Estimation Target

\[ \begin{aligned} \mu(1) - \mu(0) \qfor \mu(x) &= \frac{1}{m_x}\sum_{j:x_j = x } y_j \\ \qqtext{ where } \quad m_x &= \sum_{j:x_j=x} 1 \end{aligned} \]

Estimator

\[ \begin{aligned} \hat\mu(1) - \hat\mu(0) \qfor \hat\mu(x) &= \frac{1}{N_x}\sum_{i:W_i=x} Y_i \\ \qqtext{ where } \quad N_x &= \sum_{i:W_i=x} 1. \end{aligned} \]

Is This Estimator Good?

- We want to know about our estimator’s …

- bias. That’s the location of the sampling distribution relative to the estimation target.

- standard deviation. Roughly speaking, that determines the width of our 95% confidence intervals.

Two Ways to Get Two Groups

Before we answer that question, we need to think about how we got our two groups. There are (at least) two different sampling schemes that give us data like this.

Stratified Sampling. We decide in advance how many people to sample from each group. We sample \(n_0\) people from the no-degree subpopulation and \(n_1\) people from the degree subpopulation, independently.

Ordinary Sampling. We sample \(n\) people from the whole population. We get whoever we get. The number of people in each group, \(N_0\) and \(N_1\), is random.

These are different. In stratified sampling, the group sizes \(n_0\) and \(n_1\) are fixed numbers we choose. In ordinary sampling, the group sizes \(N_0\) and \(N_1\) are random variables—we don’t know what they’ll be until we see our sample.

This matters for our analysis. Stratified sampling is simpler because we’re essentially doing two separate one-sample problems and combining them. Ordinary sampling is more complicated because we have to deal with the randomness in the group sizes.

We’ll start with stratified sampling.

Stratified Sampling

The Setup

Suppose we have two subpopulations—people without and with 4-year degrees. We sample independently from each:

- From the no-degree subpopulation: draw \(n_0\) people with replacement.

- From the degree subpopulation: draw \(n_1\) people with replacement.

Our data looks like this: \[ \begin{aligned} &\text{From subpopulation 0:} && Y_{0,1} \ldots Y_{0,n_0} && \text{all sampled uniformly from } y_1 \ldots y_{m_0} \text{ with } x_j=0 \\ &\text{From subpopulation 1:} && Y_{1,1} \ldots Y_{1,n_1} && \text{all sampled uniformly from } y_1 \ldots y_{m_1} \text{ with } x_j=1 \end{aligned} \]

The key feature: \(n_0\) and \(n_1\) are fixed numbers that we chose in advance.

Target and Estimator

Our target is still the difference in subpopulation means. \[ \mu(1) - \mu(0) \qfor \mu(x) = \frac{1}{m_x}\sum_{j:x_j=x} y_j \]

Our estimator is the difference in subsample means. \[ \hat\mu(1) - \hat\mu(0) \qfor \hat\mu(x) = \frac{1}{n_x}\sum_{i=1}^{n_x} Y_{x,i} \]

Notice the notation is a bit different. We’re writing \(Y_{x,i}\) for the \(i\)th observation from subpopulation \(x\), and the denominator is \(n_x\), a fixed number we chose.

Unbiasedness

This is immediate from Part 1. Each subsample mean is an unbiased estimator of its subpopulation mean. \[ \mathop{\mathrm{E}}[\hat\mu(x)] = \mu(x) \]

Why? Because \(\hat\mu(x)\) is just a sample mean of \(n_x\) observations drawn uniformly-at-random from a subpopulation with mean \(\mu(x)\). That’s exactly the setup from Part 1.

And by linearity: \[ \mathop{\mathrm{E}}[\hat\mu(1) - \hat\mu(0)] = \mathop{\mathrm{E}}[\hat\mu(1)] - \mathop{\mathrm{E}}[\hat\mu(0)] = \mu(1) - \mu(0) \]

Variance

The variance is equally straightforward. From Part 1, we know: \[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(x)] = \frac{\sigma^2(x)}{n_x} \] where \(\sigma^2(x)\) is the variance of the subpopulation with \(W=w\).

For the difference, we use the fact that the two subsample means are independent—we sampled from the two subpopulations separately. When random variables are independent, the variance of their difference is the sum of their variances: \[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(1) - \hat\mu(0)] = \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(1)] + \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(0)] = \frac{\sigma^2(1)}{n_1} + \frac{\sigma^2(0)}{n_0} \]

That’s it. No conditioning tricks, no iterated expectations. Just two separate one-sample problems combined.

Estimation and Confidence Intervals

We estimate the variance by plugging in subsample variances: \[ \widehat{\mathop{\mathrm{\mathop{\mathrm{V}}}}}[\hat\mu(1) - \hat\mu(0)] = \frac{\hat\sigma^2(1)}{n_1} + \frac{\hat\sigma^2(0)}{n_0} \]

And our confidence interval is: \[ \hat\mu(1) - \hat\mu(0) \pm 1.96 \sqrt{\frac{\hat\sigma^2(1)}{n_1} + \frac{\hat\sigma^2(0)}{n_0}} \]

Summary

Stratified sampling is simple because:

- Group sizes \(n_0\) and \(n_1\) are fixed, not random.

- The two subsamples are independent.

- Everything reduces to two separate applications of Part 1’s results.

But stratified sampling requires us to decide in advance how many people to sample from each group. That’s not always possible or practical. Often we just sample from the whole population and see who we get. That’s ordinary sampling, and it’s more complicated.

The Forgetting Heuristic

What if our population has more than two groups? Suppose instead of just degree vs. no-degree, we had data on years of education: 8, 9, 10, …, 16, 18, 20. That’s many groups. But often we want to compare just two of them.

Here’s the key insight: you can “forget” the other groups exist. If you want to compare people with 16 years of education (4-year degree) to people with 12 years (high school diploma), just ignore everyone else. Extract the two groups you care about and apply exactly what we’ve developed here.

Why does this work? Because the math we did for comparing two groups never assumed they were the only two groups. We proved:

- The difference in subsample means is unbiased for the difference in subpopulation means.

- The variance of this difference is \(\frac{\sigma^2(1)}{n_1} + \frac{\sigma^2(0)}{n_0}\).

None of this depended on there being no other groups. The existence of people with 10 or 14 years of education doesn’t affect the sampling distribution of the comparison between the 12-year and 16-year groups. You can always “coarsen” a many-group comparison into a two-group one by ignoring the groups you’re not interested in.

This is a useful heuristic. When faced with a complex comparison involving many groups, ask yourself: can I reframe this as a two-group comparison by “forgetting” some groups? If so, everything from this lecture applies directly.

Ordinary Sampling

Now suppose we sample \(n\) people from the whole population—a population where each person has both a covariate \(x_j\) (degree status) and an outcome \(y_j\) (income). We get whoever we get.

The data looks like this: \[ (W_1, Y_1) \ldots (W_n, Y_n) \] Each pair \((W_i, Y_i)\) is drawn uniformly-at-random from the population of pairs \((x_1, y_1) \ldots (x_m, y_m)\).

The group sizes are now random: \[ N_x = \sum_{i=1}^n 1_{=x}(W_i) \] This is the number of sampled people with \(W_i=x\). It’s a random variable, not a fixed number.

This randomness complicates everything. To handle it, we need some probability tools.

Probability Review

Population Means and Variances as Expectations

- If a random variable \(Y\) is equally likely to be any member of a population \(y_1 \ldots y_m\), …

- … then its expectation is the population mean \(\mu=\frac{1}{m}\sum_{j=1}^m y_j\).

- … and its variance is the population variance \(\sigma^2 = \frac{1}{m}\sum_{j=1}^m (y_j - \mu)^2\).

- Terminology. When each of our observations \(Y_i\) is like this, we’re sampling uniformly-at-random.

\[ \begin{aligned} \mathop{\mathrm{E}}[Y] &= \sum_{j=1}^m \underset{\text{response}}{y_j} \times \underset{\text{probability}}{\frac{1}{m}} = \frac{1}{m}\sum_{j=1}^m y_j = \mu \\ \mathop{\mathrm{E}}[(Y-\mathop{\mathrm{E}}[Y])^2] &= \sum_{j=1}^m \underset{\text{deviation}^2}{(y_j - \mu)^2} \times \underset{\text{probability}}{\frac{1}{m}} = \frac{1}{m}\sum_{j=1}^m (y_j - \mu)^2 = \sigma^2 \end{aligned} \]

Subpopulations and Conditioning

- Conditioning is, in effect, a way of thinking about sampling as a two-stage process.

- First, we choose the color of our dot, i.e., the value of \(W_i\), according to it frequency in the population.

- Then, we choose a specific one of those dots, i.e. \(J_i\), from those with that color—with equal probability.

- Because this is just a way of thinking, each person still gets chosen with probability \(1/m\).

\[ \begin{aligned} P(J_i=j) = \begin{cases} \frac{m_{green}}{m} \ \times \frac{1}{m_{green}} \ = \ \frac{1}{m} & \text{if the $j$th dot is green ($x_j=1$) } \\ \frac{m_{red}}{m} \ \times \frac{1}{m_{red}} \ = \ \frac{1}{m} & \text{otherwise} \end{cases} \end{aligned} \]

- The first stage is ‘a coin flip’ that decides whether we roll the ‘red die’ or the ‘green die’ in the second.

- The Conditional Probability of \(Y_i\) is the probability resulting from the second stage.

- \(P(Y_i=y \mid W_i=1)\) is the probability distribution of \(Y_i\) when we’re ‘rolling the green die’.

- \(P(Y_i=y \mid W_i=0)\) is the probability distribution of \(Y_i\) when we’re ‘rolling the red die’.

- The Conditional Expectation of \(Y_i\) is the ‘second stage expected value’ in the same sense.

- \(\mathop{\mathrm{E}}[Y_i \mid W_i=1]\) is the expected value of \(Y_i\) when we’re ‘rolling the green die’.

- It’s the mean value \(\mu(1)\) of \(y_j\) in the subpopulation drawn as little green dots.

- \(\mathop{\mathrm{E}}[Y_i \mid W_i=0]\) is the expected value of \(Y_i\) when we’re ‘rolling the red die’.

- It’s the mean value \(\mu(0)\) of \(y_j\) in the subpopulation drawn as little red dots.

- The Conditional Variance of \(Y_i\) is like this too. It’s the ‘second stage variance.’

- \(\mathop{\mathrm{\mathop{\mathrm{V}}}}[Y_i \mid W_i=1]\) is the variance of \(Y_i\) when we’re ‘rolling the green die’.

- It’s the variance \(\sigma^2(1)\) of \(y_j\) in the subpopulation drawn as little green dots.

- \(\mathop{\mathrm{\mathop{\mathrm{V}}}}[Y_i \mid W_i=0]\) is the variance of \(Y_i\) when we’re ‘rolling the red die’.

- It’s the variance \(\sigma^2(0)\) of \(y_j\) in the subpopulation drawn as little red dots.

About Conditional Expectations and Variances

- The conditional expectation function \(\mu(x)=E[Y \mid W=x]\).

- \(\mu\) is a function; evaluated at \(x\), it’s a number. It’s not random.

- It’s the mean of the subpopulation of people with \(W=w\).

- The conditional expectation \(\mu(W)=E[Y \mid W]\).

- \(\mu(W)\) is the mean of a random subpopulation of people.

- It’s the conditional expectation function evaluated at the random variable \(X\).

- The conditional variance function \(\sigma^2(x)=E[\{Y - \mu(x)\}^2 \mid W=x]\).

- \(\sigma^2\) is a function; evaluated at \(x\), it’s a number. It’s not random.

- It’s the variance of the subpopulation of people with \(W=w\).

- The conditional variance \(\sigma^2(W)=E[\{Y - \mu(W)\}^2 \mid W]\).

- \(\sigma^2(W)\) is the variance of a random subpopulation of people.

- It’s the conditional variance function evaluated at the random variable \(X\).

- This is like what happens with conditional expectations because it is one.

- It’s the conditional expectation of some random variable. What random variable?

- It’s the conditional expectation of the square of \(\textcolor[RGB]{0,0,255}{Y-\mu(W)}\), a conditionally centered version of \(Y\).

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y \mid W] = \mathop{\mathrm{E}}[\textcolor[RGB]{0,0,255}{\{Y-\mu(W)\}}^2 \mid W] \qfor \mu(W) = \mathop{\mathrm{E}}[Y \mid W] \]

Working with Expectations

Linearity

\[ \begin{aligned} E ( a Y + b Z ) &= E (aY) + E (bZ) = aE(Y) + bE(Z) \\ &\text{ for random variables $Y, Z$ and numbers $a,b$ } \end{aligned} \]

Application. When we sample uniformly-at-random, the sample mean is unbiased.

\[ \begin{aligned} \mathop{\mathrm{E}}[\hat\mu] &= \mathop{\mathrm{E}}\qty[\frac1n\sum_{i=1}^n Y_i] \\ &= \frac1n\sum_{i=1}^n \mathop{\mathrm{E}}[Y_i] \\ &= \frac1n\sum_{i=1}^n \mu \\ &= \frac1n \times n \times \mu = \mu. \end{aligned} \]

Conditional Version. \[ \begin{aligned} E\{ a(W) Y + b(W) Z \mid W \} &= E\{a(W)Y \mid W\} + E\{ b(W)Z \mid W\} \\ &= a(W)E(Y \mid W) + b(W)E(Z \mid W) \\ & \text{ for random variables $W, Y, Z$ and functions $a,b$ } \end{aligned} \]

Expectations of Products Factor into Products of Expectations

\[ \color{gray} \mathop{\mathrm{E}}[\textcolor[RGB]{239,71,111}{Y}\textcolor[RGB]{17,138,178}{Z}] = \textcolor[RGB]{239,71,111}{\mathop{\mathrm{E}}[Y]}\textcolor[RGB]{17,138,178}{\mathop{\mathrm{E}}[Z]} \qqtext{when $\textcolor[RGB]{239,71,111}{Y}$ and $\textcolor[RGB]{17,138,178}{Z}$ are independent} \]

Application. When we sample with replacement, the sample mean’s variance is the population variance divided by \(n\).

\[ \color{gray} \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu] &= \mathop{\mathrm{E}}\qty[ \qty{ \frac{1}{n}\sum_{i=1}^n Y_i - \mathop{\mathrm{E}}\qty[ \frac{1}{n}\sum_{i=1}^n Y_i ] }^2 ] \\ &= \mathop{\mathrm{E}}\qty[ \qty{ \frac{1}{n}\sum_{i=1}^n (Y_i - \mathop{\mathrm{E}}[Y_i]) }^2 ] \\ &= \mathop{\mathrm{E}}\qty[ \qty{ \frac{1}{n}\sum_{i=1}^n Z_i }^2 ] && \text{ for } \ Z_i = Y_i - \mathop{\mathrm{E}}[Y_i] \\ &= \mathop{\mathrm{E}}\qty[ \qty{ \textcolor[RGB]{239,71,111}{\frac{1}{n}\sum_{i=1}^n Z_i }} \times \qty{\textcolor[RGB]{17,138,178}{\frac{1}{n}\sum_{j=1}^n Z_j}} ] &&\text{ with } \mathop{\mathrm{E}}[ Z_i ] = \mathop{\mathrm{E}}[ Y_i ] - \mathop{\mathrm{E}}[Y_i] = \mu - \mu = 0 \\ &= \mathop{\mathrm{E}}\qty[ \frac{1}{n^2}\textcolor[RGB]{239,71,111}{\sum_{i=1}^n} \textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \textcolor[RGB]{239,71,111}{Z_i} \textcolor[RGB]{17,138,178}{Z_j} ] &&\text{ and } \ \mathop{\mathrm{E}}[ Z_i^2] = \mathop{\mathrm{E}}[ \{ Y_i - \mathop{\mathrm{E}}[Y_i] \}^2 ] = \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y_i] = \sigma^2 \\ &= \frac{1}{n^2} \textcolor[RGB]{239,71,111}{\sum_{i=1}^n} \textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \mathop{\mathrm{E}}\qty[\textcolor[RGB]{239,71,111}{Z_i} \textcolor[RGB]{17,138,178}{Z_j} ] \\ &= \frac{1}{n^2} \textcolor[RGB]{239,71,111}{\sum_{i=1}^n} \textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \begin{cases} \mathop{\mathrm{E}}[Z_i^2]=\sigma^2 & \text{if } j=i \\ \textcolor[RGB]{239,71,111}{\mathop{\mathrm{E}}[Z_i]}\textcolor[RGB]{17,138,178}{\mathop{\mathrm{E}}[Z_j]} = 0 \times 0 & \text{if } j\neq i \end{cases} \\ &= \frac{1}{n^2} \textcolor[RGB]{239,71,111}{\sum_{i=1}^n} \sigma^2 = \frac{1}{n^2} \times n \times \sigma^2 = \frac{\sigma^2}{n} \end{aligned} \]

The Law of Iterated Expectations

\[ \mathop{\mathrm{E}}[Y] = \mathop{\mathrm{E}}\qty[ \mathop{\mathrm{E}}( Y \mid X ) ] \quad \text{ for any random variables $X, Y$} \]

| \(p\) | \(W_i\) | \(\mathop{\mathrm{E}}[Y_i \mid W_i]\) |

|---|---|---|

| \(\frac{3}{6}\) | \(0\) | \(1\) |

| \(\frac{3}{6}\) | \(1\) | \(1.25\) |

What is \(\mathop{\mathrm{E}}[Y_i]\)?

| \(p\) | \(W_i\) | \(\mathop{\mathrm{E}}[Y_i \mid W_i]\) |

|---|---|---|

| \(0.58\) | 0 | \(31.22K\) |

| \(0.42\) | 1 | \(72.35K\) |

What is \(\mathop{\mathrm{E}}[Y_i]\)?

Irrelevance of independent conditioning variables

\[ \mathop{\mathrm{E}}[ \textcolor[RGB]{17,138,178}{Y} \mid \textcolor[RGB]{17,138,178}{X}, \textcolor[RGB]{239,71,111}{X'} ] = \textcolor[RGB]{17,138,178}{\mathop{\mathrm{E}}[ Y \mid X ]} \quad \text{ when $X'$ is independent of $X,Y$ } \]

Application. When we sample with replacement, the conditional expectation of \(Y_i\) given \(W_1 \ldots W_n\) is the conditional mean of \(Y_i\) given \(W_i\) alone.

\[ \mathop{\mathrm{E}}[Y_i \mid W_1 \ldots W_n] = \mathop{\mathrm{E}}[Y_i \mid W_i] = \mu(W_i) \qqtext{ when $(W_1, Y_1) \ldots (W_n, Y_n)$ are independent.} \]

The Indicator Trick

\[ 1_{=w}(W)\mu(W) = \begin{cases} 1 \times \mu(W) & \text{ when } W=w \\ 0 \times \mu(W) & \text{ when } W \neq w \end{cases} = \begin{cases} 1 \times \mu(w) & \text{ when } W=w \\ 0 \times \mu(w) & \text{ when } W \neq w \end{cases} = 1_{=w}(W)\mu(w) \]

Application.

\[ \mathop{\mathrm{E}}[1_{=w}(W)\mu(W)] = \mathop{\mathrm{E}}[ 1_{W=w} \mu(w) ] = \mu(w) \mathop{\mathrm{E}}[1_{=w}(W)] = \mu(w) \times P(W=w) \]

Unbiasedness

A Sample Mean is Unbiased

Claim. When we sample uniformly-at-random, the sample mean is an unbiased estimator of the population mean. \[ \mathop{\mathrm{E}}[\hat\mu] = \mu \]

We proved this earlier today using linearity of expectations. See Section 22.2.1.

A Subsample Mean is Conditionally Unbiased

Claim. When we sample with replacement, a subsample mean is a conditionally unbiased estimator of the corresponding subpopulation mean as long as there’s at least one person with \(W_i=1\) \[ \mathop{\mathrm{E}}[\hat\mu(1) \mid W_1 \ldots W_n] = \mu(1) \quad \text{ if } \quad \sum_{i=1}^{n} 1_{=1}(W_i) > 0. \]

Differences in Subsample Means

Claim. A difference in subsample means is conditionally unbiased for the corresponding difference in subpopulation means as long as there sample includes at least one person in each group.

\[ \mathop{\mathrm{E}}[\hat\mu(1) - \hat\mu(0) \mid W_1 \ldots W_n] = \mu(1) - \mu(0) \quad \text{ if } \quad \sum_{i=1}^{n} 1_{=1}(W_i) > 0 \ \text{ and } \ \sum_{i=1}^{n} 1_{=0}(W_i) > 0. \]

Why?

This follows from the linearity of expectations and conditional unbiasedness of the subsample means.

\[ \mathop{\mathrm{E}}[\hat\mu(1) - \hat\mu(0) \mid W_1 \ldots W_n] = \mathop{\mathrm{E}}[\hat\mu(1) \mid W_1 \ldots W_n] - \mathop{\mathrm{E}}[\hat\mu(0) \mid W_1 \ldots W_n] = \mu(1) - \mu(0) \]

Conditional vs. Unconditional Unbiasedness

Conditional unbiasedness without the ‘if’ implies unconditional unbiasedness. That’s the law of iterated expectations.

\[ \mathop{\mathrm{E}}[\hat\mu(1)] = \mathop{\mathrm{E}}\qty[\mathop{\mathrm{E}}[\hat\mu(1) \mid W_1 \ldots W_n]] = \mathop{\mathrm{E}}[\mu(1)] = \mu(1) \qif \mathop{\mathrm{E}}[\hat\mu(1) \mid W_1 \ldots W_n] = \mu(1) \]

But the ‘if’ is a problem. Fundamentally, the problem is that if there’s nobody with \(W_i=1\), there isn’t a subsample mean for that group. There’s nobody in the subsample to average. The best we could do is just make something up. However, when our sample is large, it’s very unlikely that there’s nobody in the subsample for any group, so if we make up anything at all reasonable, we’ll get an estimator that’s pretty close to unbiased.

\[ \begin{aligned} \mathop{\mathrm{E}}[\hat\mu(1)] &= \mathop{\mathrm{E}}\qty[\mathop{\mathrm{E}}[\hat\mu(1) \mid W_1 \ldots W_n]] \\ &= \sum_{\substack{x_1 \ldots x_n \\ \sum_{i=1}^{n} 1_{=1}(x_i) > 0}} \mu(1) \times P(W_1=x_1, \ldots, W_n=x_n) \\ &+ \sum_{\substack{x_1 \ldots x_n \\ \sum_{i=1}^{n} 1_{=1}(x_i) = 0}} \text{whatever} \times P(W_1=x_1, \ldots, W_n=x_n) \\ &= (1-p_0) \ \mu(1) \ + \ p_0 \ \text{whatever} \qfor p_0 = P(W_i=0 \text{ for all } i) \\ &\approx \mu(1) \qif p_0 \approx 0 \end{aligned} \]

For this reason, we’ll mostly ignore this issue from here on. We’ll call subsample means unbiased estimators of subpopulation means because they’re close enough, but you should be aware that there is a subtle issue here.

Why Unconditional Unbiasedness is Impossible

It’s not just that we haven’t found an unconditionally unbiased estimator—there isn’t one. No matter how clever we are about what to do when a group is empty, we can’t achieve unconditional unbiasedness.

Here’s why. Consider estimating \(\mu(1)\). Any estimator \(\hat\mu(1)\) we construct is a function of our data \((W_1, Y_1) \ldots (W_n, Y_n)\). When \(N_1 = 0\)—when nobody in our sample has \(W_i = 1\)—we have no observations from the group with \(W=1\). Our estimator can only depend on observations from the other group.

But the subpopulation mean \(\mu(1)\) could be anything. It’s a feature of the green subpopulation, which we haven’t seen at all when \(N_1 = 0\). For our estimator to be unbiased, we’d need \[ \mathop{\mathrm{E}}[\hat\mu(1)] = \mu(1) \qqtext{for all possible values of } \mu(1). \] When \(N_1 = 0\), our estimator \(\hat\mu(1)\) doesn’t depend on \(\mu(1)\) at all—it’s determined entirely by the red observations. So \(\mathop{\mathrm{E}}[\hat\mu(1) \mid N_1 = 0]\) is some fixed number that doesn’t change with \(\mu(1)\). But we need it to equal \(\mu(1)\), which does change. That’s impossible.

More formally: let \(c = \mathop{\mathrm{E}}[\hat\mu(1) \mid N_1 = 0]\). Then \[ \mathop{\mathrm{E}}[\hat\mu(1)] = P(N_1 > 0) \cdot \mu(1) + P(N_1 = 0) \cdot c \] For this to equal \(\mu(1)\) for all \(\mu(1)\), we’d need \(P(N_1 = 0) = 0\). But with ordinary sampling, there’s always some chance—however small—that everyone in our sample comes from the same group.

This is why stratified sampling matters. With stratified sampling, we choose to sample \(n_1 > 0\) people from the green group. We guarantee \(N_1 = n_1 > 0\) by design. The “what if nobody shows up” problem disappears, and we get unconditional unbiasedness for free.

Implications of Unbiasedness

- If we can estimate the width of its sampling distribution, we can get interval estimates with the coverage we want.

- The question that remains is whether those interval estimates are narrow enough to be useful.

Spread

The Question

Spread Within and Between Groups

- You can think of there being two kinds of variation in a population.

- Within-group variation.

- That’s illustrated above by the ‘arms’ on our subpopulation means in the plot above.

- The arm length is, in fact, the subpopulation standard deviation.

- That’s the square root of the conditional variance function \(\sigma^2(x) = \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y \mid W=x]\).

- Between-group variation.

- That’s about the difference between those subsample means.

- One reasonable summary is the standard deviation of the subpopulation means \(\mu(x) = \mathop{\mathrm{E}}[Y \mid W=x]\).

The Law of Total Variance

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \mathop{\mathrm{E}}\qty{\mathop{\mathrm{\mathop{\mathrm{V}}}}( Y \mid X ) } + \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty{\mathop{\mathrm{E}}( Y \mid X ) } \]

- The Law of Total Variance breaks Variance into within-group and between-group terms.

- It’s like the Law of Iterated Expectations, but for Variance.

- It’s a useful way to decompose the variance of a random variable.

- Think about where most of the variance in \(Y\) is coming from in the two populations shown above.

We won’t prove this, but it’s in the slides if you’re interested. See Chapter 25.

Check Your Understanding

- Try to put the three terms into words.

- \(\mathop{\mathrm{\mathop{\mathrm{V}}}}[Y]\) is the …

- \(\mathop{\mathrm{E}}\qty{ \mathop{\mathrm{\mathop{\mathrm{V}}}}(Y \mid W) }\) is the …

- \(\mathop{\mathrm{\mathop{\mathrm{V}}}}\qty{\mathop{\mathrm{E}}( Y \mid W)}\) is the …

- Do this in two ways.

- Using ‘Random Variables Language’,

- e.g. ‘the expected value of the conditional variance of Y given X’

- Using ‘Population Language’

- e.g. ‘the variance of income (Y) within the random subpopulation with degree status X’

- Using ‘Random Variables Language’,

The Variance of the Sample Mean

Claim. When we sample w/ replacement, the variance of the sample mean

is the population variance divided by the number of people in the sample.

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu] = \frac{\sigma^2}{n} \]

- We proved this earlier today using. See Section 22.2.2.

- We used, among other things, the property that expectations of products factor into products of expectations.

- Our proof for the subsample mean will be similar with more conditioning.

- We’ll use a conditional version of that we can derive using the ‘law of iterated iterated expectations’.

Conditional Expectations of Products Factor

When we sample with replacement, the conditional expectation of products factors into a product of conditional expectations.

\[ \mathop{\mathrm{E}}[Y_i \ Y_j \mid W_1 \ldots W_n] = \mathop{\mathrm{E}}[Y_i \mid W_i]\mathop{\mathrm{E}}[Y_j \mid W_j] = \mu(W_i)\mu(W_j) \qqtext{ when $(W_1, Y_1) \ldots (W_n, Y_n)$ are independent.} \]

This is a subtle application of two of our ‘laws’. \[ \begin{aligned} &\mathop{\mathrm{E}}[Y \mid W] = \mathop{\mathrm{E}}[ \mathop{\mathrm{E}}\qty[Y \mid W, X] \mid W] \quad \text{ for any random variables $W, X, Y$} && \text{Law of Iterated Iterated Expectations} \\ &\mathop{\mathrm{E}}[ \textcolor[RGB]{17,138,178}{Y} \mid \textcolor[RGB]{17,138,178}{X}, \textcolor[RGB]{239,71,111}{X'} ] = \textcolor[RGB]{17,138,178}{\mathop{\mathrm{E}}[ Y \mid X ]} \quad \text{ when $X'$ is independent of $X,Y$ } && \text{Irrelevance of Independent Conditioning Variables} \end{aligned} \]

The Variance of the Subsample Mean

Claim. When we sample with replacement, the variance of a subsample mean is the

expected value of the subpopulation variance divided by the number of people in the subsample. \[

\mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(1)] = \mathop{\mathrm{E}}\qty[ \frac{\sigma^2(1)}{N_1} ] \quad \text{ for } \quad N_1 = \sum_{i=1}^n 1_{=1}(W_i)

\]

- This is like what we saw in the unconditional case, but with a twist.

- The number of people in the subsample is random, so instead of \(1/n\), we have \(\mathop{\mathrm{E}}[ \frac{1}{N_1} ]\).

Step 1. Total Variance

- We’ll start by using the Law of Total Variance, conditioning on \(W_1 \ldots W_n\).

- And then we’ll have to calculate two terms.

- The expected value of the conditional variance

- The variance of the conditional expectation \[ \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty[ \hat \mu(1) ] &= \underset{\color[RGB]{64,64,64}\text{within-groups term}}{\mathop{\mathrm{E}}\qty[ \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty{ \hat \mu(1) \mid W_1 \ldots W_n }] } + \underset{\color[RGB]{64,64,64}\text{between-groups term}}{\mathop{\mathrm{\mathop{\mathrm{V}}}}\qty[ \mathop{\mathrm{E}}\qty{\hat \mu(1) \mid W_1 \ldots W_n} ] } \\ \end{aligned} \]

- This is one place it helps to know our estimator is conditionally unbiased.

- That conditional expectation is the constant \(\mu(1)\).1

- So the ‘between-groups’ variance term is zero.

Step 2. Centering and Squaring

\[ \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty[ \hat \mu(1) \mid W_1 \ldots W_n ] &\overset{\texttip{\small{\unicode{x2753}}}{Definitionally.}}{=} \mathop{\mathrm{E}}\qty[ \qty{\hat \mu(1) - \mu(1) }^2 \mid W_1 \ldots W_n ] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Also definitionally.}}{=} \mathop{\mathrm{E}}\qty[ \qty{\frac{\sum_{i=1}^n 1_{=1}(W_i)Y_{i}}{\sum_{i=1}^n 1_{=1}(W_i)} - \mu(1)}^2 \mid W_1 \ldots W_n ] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Multiplying $\mu(1)$ by $N_1/N_1$ to get a common denominator}}{=} \mathop{\mathrm{E}}\qty[ \qty{ \frac{\sum_{i=1}^n 1_{=1}(W_i){\textcolor[RGB]{0,0,255}{\qty{Y_{i} - \mu(1)}}}}{\sum_{i=1}^n 1_{=1}(W_i)}}^2 \mid W_1 \ldots W_n ] \\ &\overset{\texttip{\small{\unicode{x2753}}}{The indicator trick tells us the factors we've highlighted in \textcolor[RGB]{0,0,255}{blue} are the same.}}{=} \mathop{\mathrm{E}}\qty[ \qty{ \frac{\sum_{i=1}^n 1_{=1}(W_i)\textcolor[RGB]{0,0,255}{Z_i}}{\sum_{i=1}^n 1_{=1}(W_i)}}^2 \mid W_1 \ldots W_n ] \qfor \textcolor[RGB]{0,0,255}{Z_i = Y_{i} - \mu(W_i)} \\ &\overset{\texttip{\small{\unicode{x2753}}}{Expanding the square.}}{=} \mathop{\mathrm{E}}\qty[ \frac{\textcolor[RGB]{239,71,111}{\sum_{i=1}^n}\textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \textcolor[RGB]{239,71,111}{1_{=1}(W_i)Z_i} \ \textcolor[RGB]{17,138,178}{1_{=1}(W_j)Z_j}}{\qty{\sum_{i=1}^n 1_{=1}(W_i) }^2} \mid W_1 \ldots W_n ] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Distributing the expectation. We can pull out the denominator and the indicator in each term because they're functions of $W_1 \ldots W_n$.}}{=} \frac{\textcolor[RGB]{239,71,111}{\sum_{i=1}^n}\textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \textcolor[RGB]{239,71,111}{1_{=1}(W_i)} \textcolor[RGB]{17,138,178}{1_{=1}(W_j)}\mathop{\mathrm{E}}\qty[\textcolor[RGB]{239,71,111}{Z_i} \textcolor[RGB]{17,138,178}{Z_j} \mid W_1 \ldots W_n] }{\qty{\sum_{i=1}^n 1_{=1}(W_i) }^2} \end{aligned} \]

This random variable \(Z_i\) is like the one we have in the unconditional case, but conditionally.

\[ \begin{aligned} \mathop{\mathrm{E}}[ Z_i \mid W_i ] &= \mathop{\mathrm{E}}[ Y_i - \mu(W_i) \mid W_i ] = \mathop{\mathrm{E}}[ Y_i \mid W_i ] - \mu(W_i) = \mu(W_i) - \mu(W_i) && \overset{\texttip{\small{\unicode{x2753}}}{It has conditional expectation zero. }}{\ } \\ \mathop{\mathrm{E}}[ Z_i^2 \mid W_i] &= \mathop{\mathrm{E}}[ \{ Y_i - \mathop{\mathrm{E}}[Y_i \mid W_i] \}^2 \mid W_i] = \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y_i \mid W_i] && \overset{\texttip{\small{\unicode{x2753}}}{The conditional expectation of its square is the conditional variance of $Y_i$ }}{\ } \end{aligned} \]

Step 3. Taking Expectations Term-by-Term

Squared Terms (\(i=j\))

\[ \begin{aligned} \mathop{\mathrm{E}}\qty[\textcolor[RGB]{239,71,111}{Z_i}\textcolor[RGB]{17,138,178}{Z_j} \mid W_1 \ldots W_n] &\overset{\texttip{\small{\unicode{x2753}}}{Because $i=j$}}{=} \mathop{\mathrm{E}}\qty[ Z_i^2 \mid W_1 \ldots W_n] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Because $X' = (W_1 \ldots W_n \text{except} W_i)$ is independent of $W_i$ and $Y_i$.}}{=} \mathop{\mathrm{E}}\qty[ Z_i^2 \mid W_i] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Definitionally}}{=}\mathop{\mathrm{\mathop{\mathrm{V}}}}[Y_i \mid W_i] = \sigma^2(W_i) \end{aligned} \]

Cross-Terms (\(i\neq j\))

\[ \begin{aligned} \mathop{\mathrm{E}}\qty[\textcolor[RGB]{239,71,111}{Z_i} \textcolor[RGB]{17,138,178}{Z_j} \mid W_1 \ldots W_n] &\overset{\texttip{\small{\unicode{x2753}}}{This is the factorization identity we proved a few slides back.}}{=}\textcolor[RGB]{239,71,111}{\mathop{\mathrm{E}}\qty[Z_i \mid W_i]} \textcolor[RGB]{17,138,178}{\mathop{\mathrm{E}}\qty[Z_j \mid W_j]} \\ &\overset{\texttip{\small{\unicode{x2753}}}{Definitionally}}{=} 0 \times 0 \end{aligned} \]

Step 4. Putting the Pieces Together

\[ \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty[ \hat \mu(1) \mid W_1 \ldots W_n ] &\overset{\texttip{\small{\unicode{x2753}}}{Step 1}}{=} \underset{\color[RGB]{64,64,64}\text{within-groups term}}{\mathop{\mathrm{E}}\qty[ \mathop{\mathrm{\mathop{\mathrm{V}}}}\qty{ \hat \mu(1) \mid W_1 \ldots W_n }] } + \underset{\color[RGB]{64,64,64}\text{between-groups term}}{ 0 } \\ &\overset{\texttip{\small{\unicode{x2753}}}{Step 2}}{=} \mathop{\mathrm{E}}\qty[\frac{\textcolor[RGB]{239,71,111}{\sum_{i=1}^n}\textcolor[RGB]{17,138,178}{\sum_{j=1}^n} \textcolor[RGB]{239,71,111}{1_{=1}(W_i)} \textcolor[RGB]{17,138,178}{1_{=1}(W_j)}\mathop{\mathrm{E}}\qty[\textcolor[RGB]{239,71,111}{Z_i} \textcolor[RGB]{17,138,178}{Z_j} \mid W_1 \ldots W_n] }{\qty{\sum_{i=1}^n 1_{=1}(W_i) }^2}] + 0 \\ &\overset{\texttip{\small{\unicode{x2753}}}{Step 3}}{=} \mathop{\mathrm{E}}\qty[\frac{\textcolor[RGB]{239,71,111}{\sum_{i=1}^n 1_{=1}(W_i)} \ \sigma^2(W_i) }{\qty{\sum_{i=1}^n 1_{=1}(W_i) }^2}] \\ &\overset{\texttip{\small{\unicode{x2753}}}{The indicator trick again.}}{=} \mathop{\mathrm{E}}\qty[\frac{\textcolor[RGB]{239,71,111}{\sum_{i=1}^n 1_{=1}(W_i)} \ \sigma^2(1) }{\qty{\sum_{i=1}^n 1_{=1}(W_i) }^2}] \\ &\overset{\texttip{\small{\unicode{x2753}}}{Cancelling a common factor of $N_1$ in the numerator and denominator.}}{=} \mathop{\mathrm{E}}\qty[\frac{\sigma^2(1)}{\sum_{i=1}^n 1_{=1}(W_i) } ] \end{aligned} \]

The Variance of Differences in Means

Claim. The variance of the difference in subsample means is the sum of the variances of the subsample means. \[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(1) - \hat\mu(0)] = \mathop{\mathrm{E}}\qty[ \frac{\sigma^2(1)}{N_1} + \frac{\sigma^2(0)}{N_0}] \quad \text{ for } \quad N_x = \sum_{i=1}^n 1_{=x}(W_i) \]

This’ll be a Homework Exercise.

Estimating the Variance

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[\hat\mu(1) - \hat\mu(0)] = \mathop{\mathrm{E}}\qty[ \frac{\sigma^2(1)}{N_1} + \frac{\sigma^2(0)}{N_0}] \quad \text{ for } \quad N_x = \sum_{i=1}^n 1_{=x}(W_i) \]

- This formula includes a few things we don’t know.

- We don’t know the subpopulation standard deviations \(\sigma(0)\) or \(\sigma(1)\).

- Those are drawn in as ‘arms’ on the subpopulation means in the plot on the left.

- That’s a fake-data plot. It’s an illustration of data we don’t really get to see.

- We also don’t know the expected values of \(1/N_1\) or \(1/N_0\).

- We’d need to know some things about the population to calculate those, too.

- In particular, we’d need to know the frequency that \(x_j=1\) in our population.

- What we can do is use our sample to estimate all of those tings.

- We can use the subsample standard deviations \(\hat\sigma(0)\) or \(\hat\sigma(1)\).

- Those are drawn in as ‘arms’ on the subsample means in the plot on the right.

- That’s a plot of the data we have. Our sample. And they’re about the same length.

- We can use the \(1/N_1\) and \(1/N_0\) themselves to estimate their expected values.

- And we can plug those into our variance formula to get an estimate of this variance.

\[ \widehat{\mathop{\mathrm{\mathop{\mathrm{V}}}}}[\hat\mu(1) - \hat\mu(0)] = \frac{\hat{\sigma}^2(1)}{N_1} + \frac{\hat{\sigma}^2(0)}{N_0} \qfor \hat \sigma^2(x) = \frac{1}{N_x} \sum_{i:W_i=x} (Y_i - \hat \mu(x))^2 \]

Case Study

The NSW Experiment

Code

nsw.data = read.csv('../data/nsw.csv')

nsw = nsw.data |>

filter(dataset=='nsw')

X = as.numeric(nsw$treated)

Y = as.numeric(nsw$income78)

n = length(Y)library(purrr)

difference = mean(Y[X==1]) - mean(Y[X==0])

difference.bootstrap.samples = map_vec(1:10000, function(.) {

I = sample(1:n, n, replace=TRUE)

Xstar = X[I]

Ystar = Y[I]

mean(Ystar[Xstar==1]) - mean(Ystar[Xstar==0])

})w95.boot = width(difference.bootstrap.samples, alpha=.05)

nsw.interval = difference + c(-1,1)*w95.boot/2The National Supported Work Demonstration

| \(x\) | \(N_x\) | \(\hat \mu(x)\) | \(\hat \sigma(x)\) |

|---|---|---|---|

| 0 | 260 | 4600 | 5500 |

| 1 | 185 | 6300 | 7900 |

- The National Supported Work Demonstration was an experimental program in the 1970s that provided job training and other services.

- Here ‘experimental’ doesn’t just mean untested. It was run as a big experiment.

- From a population of eligible people, they drew a sample of size 445 to participate.

- To help estimate the impact of the program, participants were randomized into two groups.

- The ‘treated’ group received the training and services.

- The ‘control’ group did not.

- A year or two after the program ended, they compared the incomes of the two groups.

- And the difference within the sample was about $1800 per year. In 1978 dollars.

- That’s pretty good. A dollar in 1978 was worth about what $4.80 is today.

- So adjusting for inflation, that’s an in-sample difference of about $8600. Huge impact.

- But there’s problem. It’s not clear that this describes a real population-level difference.

- The sample was relatively small. And, as a result, their interval estimate was pretty wide.

\[ \begin{aligned} \mu(1)-\mu(0) &\in 1800 \pm 1300 \approx [500, \ 3100] &&\qqtext{is a 95\% confidence interval in 1978 dollars} \\ &\in 8600 \pm 6300 \approx [2300, \ 14900] &&\qqtext{adjusted for inflation} \end{aligned} \]

- This was an expensive program to run.

- If the population difference were at the low end of the interval, at 500 1978 dollars, it wouldn’t be cost-effective.

- If the population difference were at the high end of the interval, at 3100 1978 dollars, it would be.

- To figure out if it’s worth running, we need a more conclusive result.

Activities

| \(x\) | \(N_x\) | \(\hat \mu(x)\) | \(\hat \sigma(x)\) |

|---|---|---|---|

| 0 | 260 | 4600 | 5500 |

| 1 | 185 | 6300 | 7900 |

Appendix: Additional Figures

Appendix: Proving the Law of Total Variance

Starting Point: Interpreting Variance as Excess

\[ \small{ \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}(Y) &= \mathop{\mathrm{E}}\qty[ \qty{ Y - \mathop{\mathrm{E}}(Y) }^2 ] && \text{ the \emph{spread} idea: the mean square of a \emph{centered version} of $Y$ } \\ &= \mathop{\mathrm{E}}\qty(Y^2) - \qty{\mathop{\mathrm{E}}\qty(Y)}^2 && \text{ the \emph{excess} idea: the average amount $Y^2$ \emph{exceeds} the square of its mean}. \end{aligned} } \]

Let’s think, in terms of the plot, about why there is excess in the square.

- Suppose \(Y\) takes on three values — the dots —with equal probability.

- Its expectation is the average height of the black dots.

- And its expectation, squared, is the square of this.

- Its square \(Y^2\) takes on the the square of those values—the squares — with equal probability.

- Its expectation is the average height of the squares.

- Why is the second one bigger?

- Because squaring increases big draws of Y more than it decreases small ones.

- Visually, the line from \(Y\) to \(Y^2\) is longer when \(Y\) is bigger than when it’s smaller.

- We’ll mostly think about variance in terms of spread, but the ‘excess’ formula comes up in calculations.

Equivalence Proof

Claim. The spread and excess formulas for variance are equivalent. \[ \mathop{\mathrm{E}}\qty[ \qty{ Y - \mathop{\mathrm{E}}(Y) }^2 ] =\mathop{\mathrm{E}}[ Y^2] - \qty{\mathop{\mathrm{E}}(Y)}^2 \]

\[ \small{ \begin{aligned} \mathop{\mathrm{E}}\qty[ \qty{ Y - \mathop{\mathrm{E}}(Y) }^2 ] &= \mathop{\mathrm{E}}\qty[ Y^2 - 2 Y \mathop{\mathrm{E}}(Y) + \qty{\mathop{\mathrm{E}}(Y)}^2 ] && \text{ FOIL } \\ &= \mathop{\mathrm{E}}[ Y^2] - \mathop{\mathrm{E}}\qty[2 Y \mathop{\mathrm{E}}(Y)] + \mathop{\mathrm{E}}\qty[\qty{\mathop{\mathrm{E}}(Y)}^2] && \text{Distributing expectations (linearity)} \\ &= \mathop{\mathrm{E}}[ Y^2] - 2\mathop{\mathrm{E}}(Y)\mathop{\mathrm{E}}\qty[Y] + \qty{\mathop{\mathrm{E}}(Y)}^2\mathop{\mathrm{E}}[1] && \text{Pulling constants out of expectations (linearity)} \\ &= \mathop{\mathrm{E}}[ Y^2] - 2\qty{\mathop{\mathrm{E}}(Y)}^2 + \qty{\mathop{\mathrm{E}}(Y)}^2] && \text{Recognizing a product of something with itself as a square} \\ &= \mathop{\mathrm{E}}[ Y^2] - \cancel{2}\qty{\mathop{\mathrm{E}}(Y)}^2 + \cancel{\qty{\mathop{\mathrm{E}}(Y)}^2}] && \text{Subtracting} \end{aligned} } \]

Conditional Variance as Excess

\[ \small{ \begin{aligned} \mathop{\mathrm{\mathop{\mathrm{V}}}}(Y \mid W) &= \mathop{\mathrm{E}}\qty[ \qty{ Y - \mathop{\mathrm{E}}(Y\mid W)}^2 \mid X ] && \text{ the \emph{spread} idea: the mean square of a conditionally centered version of $Y$ } \\ &= \mathop{\mathrm{E}}\qty(Y^2 \mid W) - \qty{\mathop{\mathrm{E}}\qty(Y \mid W)}^2 && \text{ the \emph{excess} idea: the average amount $Y^2$ exceeds the square of its cond. mean} \end{aligned} } \]

- We can think of the Conditional Variance as excess, too.

- It’s the same as the unconditional case, but within subpopulations.

Proof

Claim. \[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \textcolor{blue}{\mathop{\mathrm{E}}\qty[\mathop{\mathrm{\mathop{\mathrm{V}}}}(Y \mid W)]} + \textcolor{green}{\mathop{\mathrm{\mathop{\mathrm{V}}}}\qty[\mathop{\mathrm{E}}(Y \mid W)]} \]

- Rewrite the variances, conditional and unconditional, in excess form.

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \textcolor{blue}{\mathop{\mathrm{E}}\qty[ \mathop{\mathrm{E}}(Y^2 \mid W) - \qty{\mathop{\mathrm{E}}\qty(Y \mid W)}^2]} + \textcolor{green}{\mathop{\mathrm{E}}\qty{ \mathop{\mathrm{E}}(Y \mid W)^2} - \qty(\mathop{\mathrm{E}}\qty{\mathop{\mathrm{E}}\qty(Y \mid W)})^2} \]

- Distribute the outer expectations in the first term.

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \textcolor{blue}{\mathop{\mathrm{E}}\qty[ \mathop{\mathrm{E}}(Y^2 \mid W)] - \mathop{\mathrm{E}}\qty[\qty{\mathop{\mathrm{E}}\qty(Y \mid W)}^2]} + \textcolor{green}{\mathop{\mathrm{E}}\qty[ \qty{\mathop{\mathrm{E}}(Y \mid W)^2} ] - \qty(\mathop{\mathrm{E}}\qty{\mathop{\mathrm{E}}\qty(Y \mid W)})^2} \]

- Cancel matching terms

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \textcolor{blue}{\mathop{\mathrm{E}}\qty[ \mathop{\mathrm{E}}(Y^2 \mid W)] \cancel{- \mathop{\mathrm{E}}\qty[\qty{\mathop{\mathrm{E}}\qty(Y \mid W)}^2]}} + \textcolor{green}{\cancel{\mathop{\mathrm{E}}\qty[ \qty{\mathop{\mathrm{E}}(Y \mid W)^2} ]} - \qty(\mathop{\mathrm{E}}\qty{\mathop{\mathrm{E}}\qty(Y \mid W)})^2} \]

- Use the law of iterated expectations to simplify the remaining terms.

\[ \mathop{\mathrm{\mathop{\mathrm{V}}}}[Y] = \textcolor{blue}{E[Y^2] \cancel{- \mathop{\mathrm{E}}\qty[\qty{\mathop{\mathrm{E}}\qty(Y \mid W)}^2]}} + \textcolor{green}{\cancel{\mathop{\mathrm{E}}\qty[ \qty{\mathop{\mathrm{E}}(Y \mid W)^2} ]} - (\mathop{\mathrm{E}}\qty{Y})^2} \]

We showed that on Section 22.2.1.↩︎